Artificial intelligence has already spawned numerous concepts that drive high-end technologies, and there has always been a debate over which language is most appropriate for machine learning. Many companies have started deploying machine learning algorithms with Python, and many data scientists consider it best suited for this purpose because of its flexibility and extensive libraries.

On the other side, R, the lingua franca of statistics and analytics, is often ranked among the programming languages most preferred by data scientists. Because it is so widely used by statisticians, leading e-learning companies like Intellipaat provide Data Science courses with R programming. Programmers now have one more reason to opt for R: it is being used to detect emotions.

Yet another addition to the R ecosystem!

R has a broad ecosystem, and developers can incorporate various packages and APIs to perform different functions. The latest extension is detecting and analyzing emotions. The question, however, is whether R can detect facial expressions, emotions in text, or both.

Well, if the popular blogs and articles are to be believed, R is well suited for both. Its flexibility allows developers to install packages such as tidytext (for working with tidy text data) or syuzhet.

Tidy and Syuzhet: What are they?

The most critical issue in analysis is the cleaning of data, which is not just performed once, but many times over the course of analysis. As soon as new problem statements are added, new data is collected which requires cleaning.

Tidy data is a way of resolving this issue. Every value in a dataset belongs to a variable and an observation, and tidy data maps the structure of the dataset onto these semantics. In tidy data, each variable forms a column, each observation forms a row, and each type of observational unit forms a table. For text, this means a table with one token (typically a word) per row, which lets R parse the text and analyze the emotional words, provided the lexicon contains them.
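As a minimal sketch of the tidy text format, assuming the tibble, dplyr, and tidytext packages are installed, the following turns two made-up lines of text into a one-word-per-row table. The sample sentences and the names text_df and tidy_words are illustrative only.

```r
library(tibble)
library(dplyr)
library(tidytext)

# Two short, made-up lines of text, one row per line
text_df <- tibble(
  line = 1:2,
  text = c("I am happy today", "The delay made me angry")
)

# One token (word) per row; unnest_tokens() lowercases the words
# and strips punctuation by default
tidy_words <- text_df %>%
  unnest_tokens(word, text)

tidy_words
```

Each row of tidy_words keeps the line number it came from, so the words can later be joined against a sentiment lexicon and grouped back by line.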

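There are three general-purpose lexicons, named AFINN, bing, and nrc, each containing a different set of emotion-related words. AFINN scores words from -5 to 5, bing labels them as positive or negative, and nrc assigns them to emotion categories. To view the sentiments in any of these lexicons, we can use the get_sentiments() function from the tidytext package like this:

library(tidytext)

get_sentiments("nrc")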

## # A tibble: 13,901 × 2

## word sentiment

## <chr> <chr>

## 1 abacus trust

## 2 abandon fear

## 3 abandon negative

## 4 abandon sadness

## 5 abandoned anger

## 6 abandoned fear

## 7 abandoned negative

## 8 abandoned sadness

## 9 abandonment anger

## 10 abandonment fear

## # … with 13,891 more rows

To find the happy words in a novel, say “The Fault in Our Stars” by John Green, we follow the steps below:

# Assumes a johngreen package that provides johngreen_books(), a data frame with text and book columns (modeled on janeaustenr::austen_books())

library(johngreen)

library(dplyr)

library(stringr)

library(tidytext)

tidy_books <- johngreen_books() %>%

  group_by(book) %>%

  mutate(linenumber = row_number(),

         chapter = cumsum(str_detect(text, regex("^chapter [\\divxlc]",

                                                 ignore_case = TRUE)))) %>%

  ungroup() %>%

  unnest_tokens(word, text)

Now, to count the joy words in this novel, we use the count() function, as in the following example:

nrcjoy <- get_sentiments("nrc") %>%

  filter(sentiment == "joy")

tidy_books %>%

  filter(book == "faultinourstars") %>%

  inner_join(nrcjoy, by = "word") %>%

  count(word, sort = TRUE)

## # A tibble: 303 × 2

## word n

## <chr> <int>

## 1 good 359

## 2 young 192

## 3 friend 166

## 4 hope 143

## 5 happy 125

## 6 love 117

## 7 deal 92

## 8 found 92

## 9 present 89

## 10 kind 82

## # … with 293 more rows

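The output shows how often each joy word appears in the novel. Next, we compute a net sentiment score (positive minus negative word counts) for every 80-line section of each book using the bing lexicon:

library(tidyr)

johngreensentiment <- tidy_books %>%

  inner_join(get_sentiments("bing"), by = "word") %>%

  count(book, index = linenumber %/% 80, sentiment) %>%

  spread(sentiment, n, fill = 0) %>%

  mutate(sentiment = positive - negative)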
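The same net-sentiment idea can be tried without a book package. The sketch below is an illustration under stated assumptions: the four lines of text are made up, the section size is 2 lines instead of 80, and it uses the bing lexicon that ships with tidytext. The names lines_df and net_sentiment are hypothetical.

```r
library(tibble)
library(dplyr)
library(tidytext)

# Made-up text, one row per line
lines_df <- tibble(
  linenumber = 1:4,
  text = c(
    "It is very good to know that I am promoted",
    "I was angry and sad yesterday",
    "This dress is beautiful and I love it",
    "The workload was bad and the day was terrible"
  )
)

net_sentiment <- lines_df %>%
  unnest_tokens(word, text) %>%
  # Keep only words that appear in the bing lexicon
  inner_join(get_sentiments("bing"), by = "word") %>%
  # Group every 2 lines into one section (the article uses 80 for novels)
  group_by(index = (linenumber - 1) %/% 2) %>%
  summarise(
    positive = sum(sentiment == "positive"),
    negative = sum(sentiment == "negative"),
    net = positive - negative
  )

net_sentiment
```

Counting with sum() inside summarise() avoids a missing column when a section happens to contain only positive or only negative words.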

Coming to syuzhet, this package is equipped with a powerful sentiment extraction tool. The package itself installs from CRAN like any other; only its optional Stanford method relies on a separate installation of the Stanford CoreNLP toolkit. The package includes functions such as get_sentences(), get_text_as_string(), get_tokens(), get_sentiment(), and get_nrc_sentiment(), the last of which uses a lexicon of emotions (anger, fear, anticipation, trust, surprise, sadness, joy, and disgust) and sentiments (negative and positive). These functions help developers extract the mood of any text, which can then be analyzed by plotting the emotions on a chart. Look at the following code:

library(syuzhet)

my_example_text <- "This is an emotion detection test and here I will be writing different short sentences with different emotions.

It is very good to know that I am promoted.

I am not feeling good as I am having fever.

I was angry yesterday as my boss scolded me.

Yesterday was stressful due to huge workload.

This dress is beautiful and I want to buy it for the upcoming function."

# get_sentences splits the text into a vector of sentences.

s_v <- get_sentences(my_example_text)

# get_tokens tokenizes the text into words instead of sentences.

word_v <- get_tokens(my_example_text, pattern = "\\W")

# Score each sentence with the default syuzhet lexicon.

syuzhet_vector <- get_sentiment(s_v, method = "syuzhet")

# OR if using the word token vector from above

# syuzhet_vector <- get_sentiment(word_v, method = "syuzhet")

We now have the text split into sentences and a sentiment score for each of them. The first few scores can be viewed with:

head(syuzhet_vector)

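However, different methods produce different outputs because they use different scales. To compare them, we can apply the sign() function, which maps positive scores to 1, negative scores to -1, and neutral scores to 0.

# Score the same sentences with the other lexicons bundled with syuzhet.

bing_vector <- get_sentiment(s_v, method = "bing")

afinn_vector <- get_sentiment(s_v, method = "afinn")

nrc_vector <- get_sentiment(s_v, method = "nrc")

rbind(

  sign(head(syuzhet_vector)),

  sign(head(bing_vector)),

  sign(head(afinn_vector)),

  sign(head(nrc_vector))

)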
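The emotion categories mentioned earlier can be extracted and charted with get_nrc_sentiment(). The following is a minimal sketch, assuming the syuzhet package is installed; the three sentences are made up and the names nrc_df and emotion_totals are illustrative.

```r
library(syuzhet)

# Made-up sentences for illustration
sentences <- c(
  "It is very good to know that I am promoted.",
  "I was angry yesterday as my boss scolded me.",
  "This dress is beautiful and I want to buy it."
)

# One row per sentence: eight emotion columns plus negative and positive
nrc_df <- get_nrc_sentiment(sentences)

# Total count per emotion across all sentences (first eight columns)
emotion_totals <- colSums(nrc_df[, 1:8])

# Horizontal bar chart of the emotion totals, smallest to largest
barplot(sort(emotion_totals), horiz = TRUE, las = 1, cex.names = 0.7,
        main = "Emotions in the sample text", xlab = "Word count")
```

Dividing emotion_totals by its sum would give proportions instead of raw counts, which is handy when comparing texts of different lengths.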

Detecting facial expressions using R Programming? How?

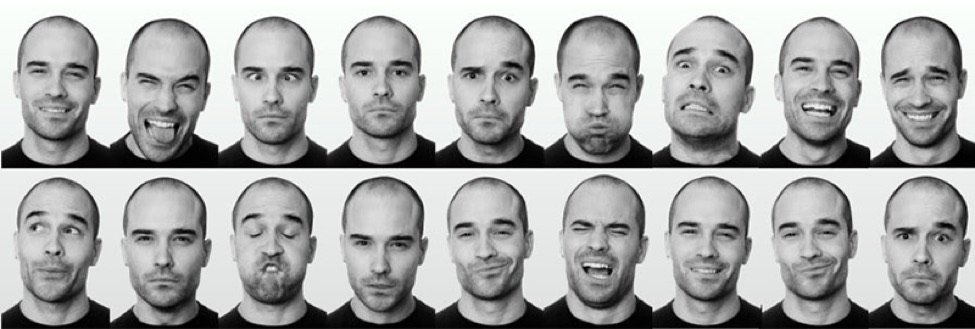

Having discussed the mood of text, we move on to facial expressions and their detection using R. Calling the Face API from R lets the environment send an image as input and receive the detected mood as output. Beyond still images, R can also identify emotions in videos.

For this, you need to call the Emotion API with the httr package and collect the results in an R data frame. Once the data has been accumulated, graphs can be plotted to analyze the expressions and their percentages.

Needless to say, the programming environment provided by R is propelling the field of Data Science, and because of its wide applications it will soon be implemented in e-commerce operations, media, and advertising.
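A rough sketch of that workflow with httr is shown below. Everything service-specific here is an assumption and not taken from the article: the endpoint URL and key are placeholders, the header name follows the Azure Cognitive Services convention and may differ for your service, and the response shape (one entry per face with a named list of scores) is hypothetical. No request is actually sent; the conversion to a data frame is demonstrated on a made-up response.

```r
library(httr)

# Hypothetical placeholders: substitute the endpoint and key of your service
api_url <- "https://example.com/emotion/recognize"
api_key <- "YOUR_SUBSCRIPTION_KEY"

# Send an image URL to the service and return the parsed JSON response
request_emotions <- function(image_url) {
  response <- POST(
    url = api_url,
    add_headers("Ocp-Apim-Subscription-Key" = api_key),
    body = list(url = image_url),
    encode = "json"
  )
  stop_for_status(response)
  content(response, as = "parsed")
}

# Convert the assumed response shape (one entry per face, each with a named
# list of emotion scores) into a data frame with one row per face
scores_to_df <- function(parsed) {
  do.call(rbind, lapply(parsed, function(face) as.data.frame(face$scores)))
}

# Made-up response used only to show the conversion; no request is sent
fake_response <- list(
  list(scores = list(happiness = 0.92, sadness = 0.01,
                     anger = 0.02, surprise = 0.05))
)
emotion_df <- scores_to_df(fake_response)

# Emotion scores for the first face, shown as percentages
barplot(unlist(emotion_df[1, ]) * 100, las = 2, ylab = "Score (%)")
```

With a real service, emotion_df would come from scores_to_df(request_emotions(image_url)), adjusted to whatever fields that service actually returns.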

Author Bio:

Sonal Maheshwari has 6 years of corporate experience in various technology platforms such as Big Data, Data Science, Salesforce, Digital Marketing, CRM, SQL, JAVA, Oracle, etc. She has worked for MNCs like Wenger & Watson Inc, CMC LIMITED, EXL Services Ltd., and Cognizant. She is a technology nerd and loves contributing to various open platforms through blogging. She is currently in association with a leading professional training provider, Intellipaat Software Solutions and strives to provide knowledge to aspirants and professionals through personal blogs, research, and innovative ideas.